Data Architecture, Integrity and Segmentation

Create the data foundation for expected credit loss (ECL) estimation through source mapping, segmentation logic, reconciliations, exceptions, and readiness checks that reviewers can trust.

Data architecture is part of the methodology

Data quality is often discussed as a technical dependency, but in ECL it is also a methodological dependency. Weak source mapping distorts segmentation, delinquency logic, default identification, cure treatment, calibration, and movement analysis. By the time the allowance is challenged, the problem no longer looks like a systems issue. It looks like a credibility issue.

That is why data architecture belongs inside the core ECL framework rather than at the edge of it.

Start with source mapping and field discipline

Teams should know where contract data, payment history, days-past-due measures, restructurings, write-offs, recoveries, collateral fields, customer identifiers, and macroeconomic inputs originate. They should also know which transformations happen before those inputs reach the model layer.

A working data map should answer simple but critical questions:

- which source system owns each key field

- how each field is defined and transformed

- where reconciliations are performed

- how missing, stale, or conflicting values are escalated
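The four questions above can be held in a small, machine-checkable structure. The following is a minimal sketch, not a prescribed format: the `FieldMapping` class, the `map_gaps` helper, and every system, field, and owner name in it are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FieldMapping:
    field: str           # name of the field as used in the ECL layer
    source_system: str   # system that owns the field
    definition: str      # agreed business definition
    transformation: str  # what happens before the model layer
    reconciled_to: str   # where the field is tied out ("" if nowhere)
    escalation: str      # who handles missing, stale, or conflicting values


# Hypothetical entries: all names below are illustrative assumptions.
DATA_MAP = [
    FieldMapping("days_past_due", "loan_servicing",
                 "Days since the oldest unpaid instalment",
                 "recomputed at month end", "collections_report",
                 "credit_risk_data_owner"),
    FieldMapping("outstanding_balance", "core_banking",
                 "Principal plus accrued interest",
                 "converted to reporting currency", "general_ledger",
                 "finance_control"),
    FieldMapping("collateral_value", "collateral_register",
                 "Latest appraised value", "none", "", ""),
]


def map_gaps(data_map):
    """Return (field, problem) pairs for entries that leave a question open."""
    gaps = []
    for m in data_map:
        if not m.reconciled_to:
            gaps.append((m.field, "no reconciliation point"))
        if not m.escalation:
            gaps.append((m.field, "no escalation route"))
    return gaps


for field, problem in map_gaps(DATA_MAP):
    print(f"{field}: {problem}")
```

In this illustration the collateral field is flagged twice, once for each unanswered question, which is the kind of gap a data map is meant to expose before modelling starts.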

Segmentation is the bridge between data and estimation

Segmentation should not be driven only by operational convenience. Portfolios need to be grouped according to shared risk characteristics, behavioural similarity, and the practical ability to estimate and explain loss behaviour. If two exposure sets react differently to delinquency, macro stress, recoveries, or restructuring, keeping them together may make the resulting allowance less meaningful even if the extraction process becomes easier.

Good segmentation improves both model fit and management understanding.
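One way to keep segment assignment explainable is to express it as an ordered list of rules and to route anything that matches no rule to a visible holding bucket instead of a default segment. The sketch below assumes a simple first-match-wins rule order; the segment names, exposure fields, and the `assign_segment` helper are hypothetical, and real segmentation would rest on the institution's own risk analysis.

```python
from collections import Counter

# Hypothetical rules as (segment name, predicate). The first match wins,
# so restructured exposures are separated before product-based grouping.
SEGMENT_RULES = [
    ("restructured", lambda e: e["restructured"]),
    ("secured_retail", lambda e: e["product"] == "mortgage"),
    ("unsecured_retail", lambda e: e["product"] in {"card", "personal_loan"}),
]


def assign_segment(exposure):
    """Return the first matching segment, or UNASSIGNED if no rule applies."""
    for name, rule in SEGMENT_RULES:
        if rule(exposure):
            return name
    return "UNASSIGNED"


# Illustrative exposures only; not real portfolio data.
exposures = [
    {"id": "A1", "product": "mortgage", "restructured": False},
    {"id": "A2", "product": "card", "restructured": False},
    {"id": "A3", "product": "card", "restructured": True},
    {"id": "A4", "product": "leasing", "restructured": False},
]

segments = {e["id"]: assign_segment(e) for e in exposures}
counts = Counter(segments.values())

for exposure_id, segment in segments.items():
    print(exposure_id, segment)
```

Keeping `UNASSIGNED` as an explicit outcome means an exposure that fits no rule shows up in the counts and can be escalated, instead of being absorbed silently into the nearest segment.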

Reconciliations and exceptions must stay visible

Few institutions have a perfect source environment. The objective is therefore not to pretend the source data is flawless. The objective is to make weaknesses visible, controlled, and proportionate. That means clear exception logs, reconciliation checks to the ledger or sub-ledger where relevant, and documented treatment for data gaps that might influence staging, model inputs, or disclosures.

Reviewers are more comfortable with an imperfect data environment that is openly governed than with a seemingly polished dataset whose limitations are unclear.
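A reconciliation that feeds an exception log can be sketched in a few lines. The example below is illustrative only: the `reconcile` function, the tolerance of 0.01, the segment keys, and the balances are all assumptions, and the comparison is shown at segment level although the appropriate level depends on the ledger structure.

```python
from decimal import Decimal


def reconcile(extract_totals, ledger_totals, tolerance=Decimal("0.01")):
    """Compare extract balances to ledger balances and log every break."""
    exceptions = []
    for key in sorted(set(extract_totals) | set(ledger_totals)):
        extract = extract_totals.get(key)
        ledger = ledger_totals.get(key)
        if extract is None or ledger is None:
            # A key present on only one side is logged, never dropped.
            exceptions.append(
                {"segment": key, "issue": "missing on one side", "difference": None}
            )
            continue
        difference = extract - ledger
        if abs(difference) > tolerance:
            exceptions.append(
                {"segment": key, "issue": "balance break", "difference": difference}
            )
    return exceptions


# Hypothetical balances for illustration.
extract = {
    "secured_retail": Decimal("1500000.00"),
    "unsecured_retail": Decimal("420000.50"),
    "leasing": Decimal("90000.00"),
}
ledger = {
    "secured_retail": Decimal("1500000.00"),
    "unsecured_retail": Decimal("419000.50"),
}

exception_log = reconcile(extract, ledger)
for entry in exception_log:
    print(entry)
```

`Decimal` is used here so that monetary differences are not blurred by binary floating-point rounding. The log records the size of each break, which lets reviewers judge whether the treatment applied was proportionate.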

What controlled data readiness looks like

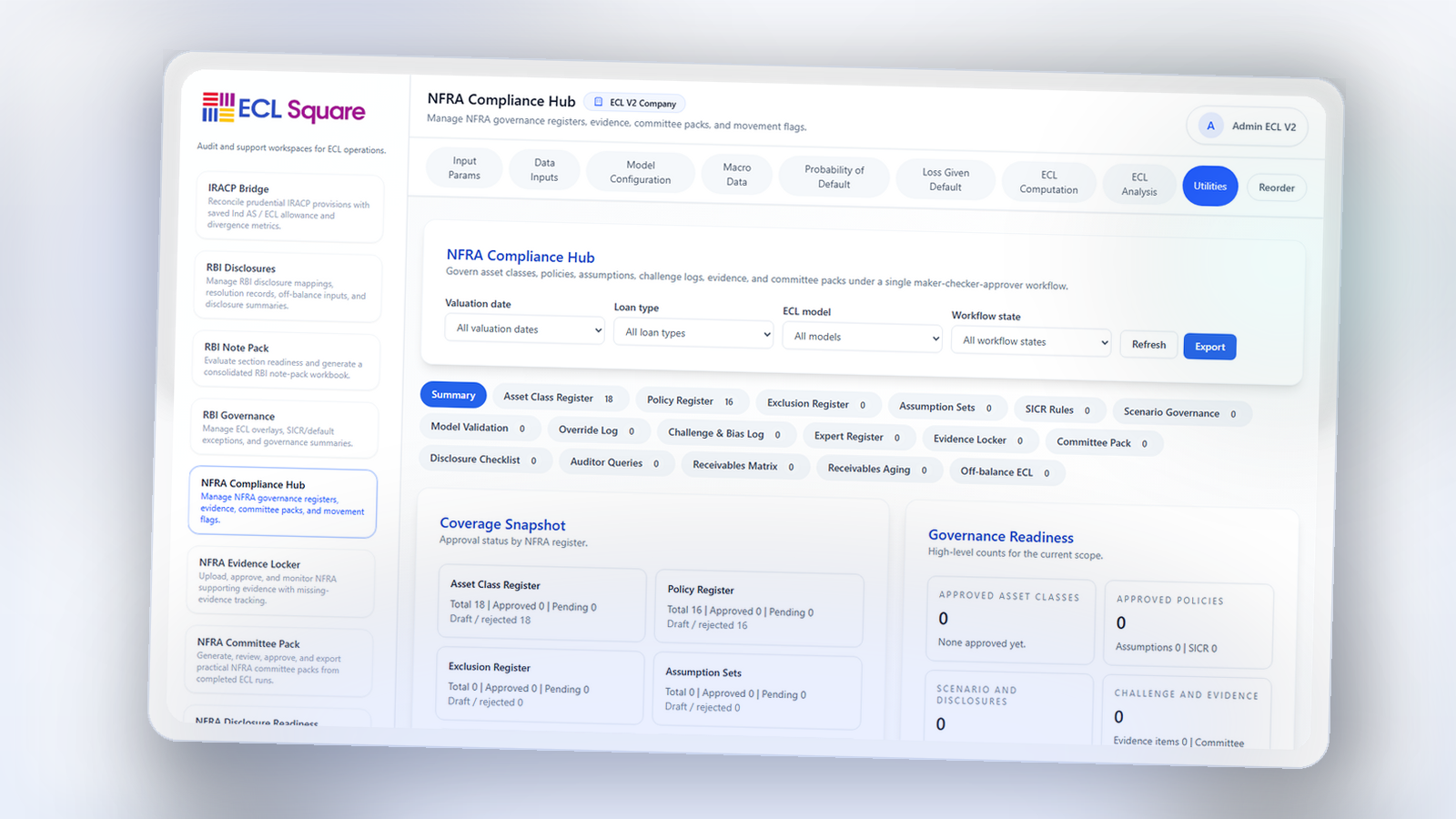

By the time this module is working properly, the team should be able to show how raw portfolio data becomes ECL-ready information, how exposures are assigned to segments, how key fields are validated, and how unresolved issues are carried into review and sign-off. That is the point where a platform like ECL Square becomes valuable: it can preserve lineage, surface exceptions, and connect data preparation to the later stages of the allowance process.
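To make the idea of carrying unresolved issues into review concrete, here is a minimal readiness-check sketch. It does not describe how ECL Square or any other platform works; the required fields, the 35-day staleness threshold, and the `check_record` and `readiness_summary` helpers are hypothetical.

```python
from datetime import date

# Hypothetical configuration: field list and staleness threshold are assumptions.
REQUIRED_FIELDS = ("contract_id", "outstanding_balance", "days_past_due")
MAX_AGE_DAYS = 35


def check_record(record, reporting_date):
    """Return a list of issues found on one record (empty if it is clean)."""
    issues = [f"missing {f}" for f in REQUIRED_FIELDS if record.get(f) is None]
    as_of = record.get("as_of")
    if as_of is None:
        issues.append("missing as_of")
    elif (reporting_date - as_of).days > MAX_AGE_DAYS:
        issues.append("stale as_of")
    return issues


def readiness_summary(records, reporting_date):
    """Summarise clean records and list open issues for review and sign-off."""
    open_issues = []
    for record in records:
        issues = check_record(record, reporting_date)
        if issues:
            open_issues.append((record.get("contract_id") or "<unknown>", issues))
    return {
        "records": len(records),
        "clean": len(records) - len(open_issues),
        "open_issues": open_issues,
    }


# Illustrative records only.
records = [
    {"contract_id": "C1", "outstanding_balance": 100.0, "days_past_due": 0,
     "as_of": date(2024, 3, 31)},
    {"contract_id": "C2", "outstanding_balance": None, "days_past_due": 45,
     "as_of": date(2024, 3, 31)},
    {"contract_id": "C3", "outstanding_balance": 250.0, "days_past_due": 10,
     "as_of": date(2024, 1, 31)},
]

summary = readiness_summary(records, date(2024, 3, 31))
print(summary)
```

The summary reports issues without filtering out the affected records, so the decision to exclude, repair, or accept them stays with the reviewers.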
