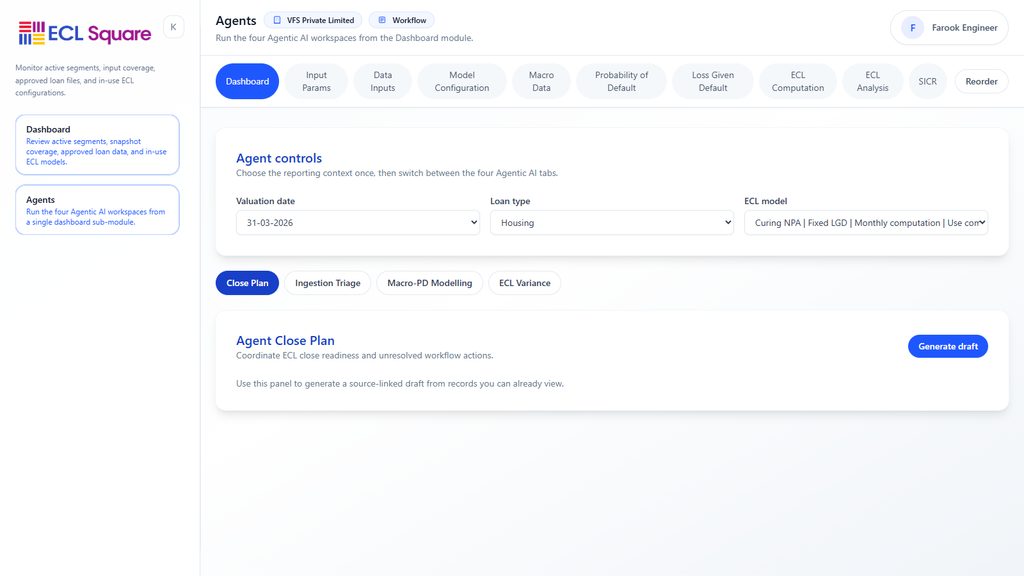

Designing the system backbone that turns Expected Credit Loss from a conceptual framework into a scalable, controlled and repeatable operating capability

An Expected Credit Loss framework can be elegant on paper and still fail in practice if the technology architecture beneath it is weak. Policies may be clear. Models may be well designed. Governance may be sound. Yet if data arrives late, staging logic lives in disconnected spreadsheets, scenario sets are updated manually, overlays are tracked outside controlled systems, and reporting requires multiple fragile file bridges, the institution will struggle to run ECL with consistency, speed and confidence. This is why technology architecture deserves a pillar of its own. It is the operating backbone of the impairment framework.

Technology in ECL is not only about automation. It is about control, traceability, scalability and explainability. A strong ECL engine does more than calculate a reserve. It ingests source data, preserves lineage, applies segmentation, runs staging logic, executes models, stores scenarios, records overrides, manages workflows, supports approvals, reconciles outputs and produces reporting views that tie cleanly into finance and disclosure. It creates a governed environment in which expected loss can be measured repeatedly without rebuilding the process every period.

This matters because ECL is one of the most operationally demanding estimates in financial reporting. It combines data from multiple systems, relies on different measurement approaches across portfolios, requires forward-looking scenario inputs, involves stage classification, may include overlays and post-model adjustments, and feeds accounting entries, movement analysis and disclosures. A spreadsheet-driven process may function for a time, especially in smaller institutions or early-stage implementations. But as portfolios grow, products diversify and governance expectations rise, the architecture becomes a limiting factor. Manual workarounds multiply. Audit trails weaken. Version confusion increases. Close cycles become stressful. The institution spends more time assembling the estimate than understanding it.

A mature institution responds by designing an ECL engine as a coherent architecture, not a loose collection of scripts, files and review calls.

This article explores that architecture in depth: what an ECL engine really is, what functional layers it should contain, how data pipelines and rule engines should be structured, where model execution fits, how scenarios and overlays should be governed technologically, how workflow and approval layers matter, how ledger and disclosure integration should be handled, and what common design mistakes cause ECL systems to become operationally brittle.

Expected Credit Loss is not a single calculation. It is a recurring process made up of multiple linked processes.

- Data must be sourced.
- Balances must be reconciled.
- Portfolios must be segmented.
- Stages must be assigned.
- Models must run.
- Scenarios must be selected and applied.
- Overlays may need to be assessed.
- Outputs must be reviewed and approved.
- Entries must be posted.
- Disclosures must be prepared.

Each of these activities may be manageable on its own. The challenge is their interaction. ECL is an orchestration problem as much as a modelling problem. Technology architecture matters because it determines whether that orchestration is stable or fragile.

A strong architecture reduces dependency on manual stitching. It ensures that the same inputs produce the same outputs under controlled conditions. It preserves evidence. It shortens close cycles. It enables explanation. Most importantly, it lets the institution focus management attention on risk interpretation rather than operational rescue work.

An ECL engine is not merely a model-execution tool. It is a controlled system environment that supports the end-to-end measurement and reporting of expected credit loss.

A mature ECL engine typically includes:

- data ingestion and staging
- portfolio mapping and reference data handling
- segmentation logic
- staging and SICR logic
- model execution for relevant portfolios
- scenario and parameter management
- manual adjustment and overlay capture
- workflow, approvals and audit trail
- accounting output preparation
- reporting and disclosure support

This broader definition is important because many institutions believe they have an ECL engine when they really have only a calculation module. If data, scenario selection, overrides, overlays and reporting still happen outside the tool, the engine is only partial. That may still be workable, but it should be recognised honestly.

One of the most common technology mistakes is to let software structure drive methodological design. Institutions buy or build tools first and then contort their ECL policy to fit the tool.

A stronger approach works in the opposite direction. The institution first defines:

- what portfolios exist
- what measurement approaches apply
- what staging logic is required
- what controls and approvals are needed
- what accounting and disclosure outputs are expected

Only then should the technology architecture be designed to support that framework.

This matters because ECL is deeply institution-specific. Portfolio mix, data maturity, governance standards and reporting needs differ widely. A good architecture supports the framework the institution actually needs rather than imposing a generic process that happens to be system-convenient.

A useful way to think about ECL technology is as a set of layers. Each layer performs a distinct role and interacts with the others through controlled interfaces.

A strong ECL architecture often includes:

- a source and ingestion layer
- a standardised ECL data layer
- a rules and transformation layer
- a modelling and calculation layer
- a scenario and adjustment layer
- a workflow and governance layer
- a reporting and integration layer

This layered approach matters because it keeps the system intelligible. If every rule, assumption and workflow is mixed into one opaque processing block, change control becomes difficult and troubleshooting becomes costly. Layering helps separate data from methodology, methodology from governance, and governance from reporting.

An ECL engine is only as good as the systems it can reliably draw from.

Common source systems may include:

- core lending platforms
- trade receivable or ERP systems
- collections systems
- collateral or valuation systems
- rating or risk systems
- general ledger systems
- treasury systems
- CRM or servicing platforms
- external macroeconomic data feeds

The architecture should define:

- which source is authoritative for each critical field
- how frequently data is pulled
- how snapshot timing is controlled
- how identifiers are aligned
- how exceptions are handled when fields conflict or are missing

This matters because many ECL problems that look like modelling problems are really source integration problems. If the system cannot reliably assemble the exposure universe, no amount of model sophistication will create a robust process.
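As a minimal sketch of the "authoritative source per field" idea, the snippet below resolves one field for one exposure from a priority-ordered list of sources. The field names, source names and priority order are hypothetical assumptions for illustration, not a recommended mapping.

```python
from typing import Any, Dict, Optional, Tuple

# Hypothetical authority map: for each critical field, source systems in
# priority order. Names and ordering are illustrative assumptions.
FIELD_AUTHORITY = {
    "outstanding_balance": ["general_ledger", "core_lending"],
    "days_past_due": ["collections", "core_lending"],
    "collateral_value": ["collateral_system"],
}


def resolve_field(field: str, values_by_source: Dict[str, Any]) -> Tuple[Optional[Any], str]:
    """Return (value, status) for one field of one exposure.

    status is the name of the source that supplied the value, or "MISSING"
    when no authoritative source did, so the gap can be routed to an
    exception process instead of being silently defaulted.
    """
    for source in FIELD_AUTHORITY[field]:
        value = values_by_source.get(source)
        if value is not None:
            return value, source
    return None, "MISSING"
```

For example, `resolve_field("days_past_due", {"core_lending": 35, "collections": 42})` prefers the collections value because that source is listed first, and the returned status records which source was used.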

A mature architecture usually benefits from a standardised ECL data layer, sometimes thought of as a canonical data model or controlled impairment mart.

This layer should translate diverse source-system structures into a consistent impairment-ready format. It may contain:

- exposure records
- customer identifiers
- product mappings
- stage indicators
- default and cure fields
- collateral links
- segment assignments
- risk grades
- cash flow schedules
- historical performance fields
- scenario mapping keys

The value of this layer is immense. It creates a common language for the ECL process. Without it, every model or report may need to interpret source fields separately, increasing inconsistency and operational risk. With it, the institution can build rules, models and reporting on a stable foundation.
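One way to picture the standardised layer is as a single record shape that every source is mapped into. The sketch below is illustrative only: the `ExposureRecord` fields are a small subset of the items listed above, and the raw column names (`ACCT_NO`, `PROD_CD` and so on) are invented for the example.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class ExposureRecord:
    """One row of a hypothetical impairment-ready data layer."""
    exposure_id: str
    customer_id: str
    snapshot_date: date
    product_code: str
    segment: str
    outstanding_balance: float
    days_past_due: int
    stage: Optional[int] = None        # filled in later by staging rules
    risk_grade: Optional[str] = None
    collateral_id: Optional[str] = None
    scenario_key: Optional[str] = None


def from_core_lending(row: dict, snapshot_date: date, product_map: dict) -> ExposureRecord:
    """Map one raw source row (hypothetical column names) to the canonical shape."""
    product_code, segment = product_map[row["PROD_CD"]]
    return ExposureRecord(
        exposure_id=f"CL-{row['ACCT_NO']}",
        customer_id=row["CUST_NO"],
        snapshot_date=snapshot_date,
        product_code=product_code,
        segment=segment,
        outstanding_balance=float(row["BAL_AMT"]),
        days_past_due=int(row["DPD"]),
    )
```

Each additional source system would get its own small mapping function, while rules, models and reports read only `ExposureRecord`.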

The ECL process contains many rules that are not statistical models but are nevertheless material. Examples include:

- product-to-portfolio mapping
- segment assignment
- days-past-due logic
- default flags
- SICR thresholds
- stage overrides
- materiality thresholds
- write-off classifications
- re-entry rules for modified accounts

These rules should ideally be externalised from hard-coded scripts where possible and stored in governed configuration layers or rule tables. This makes them easier to review, approve, version and update.

A weak architecture hides important business rules inside code fragments or manual files. A stronger one makes them visible and governable.
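A governed rule table can be sketched as versioned records with an effective date and an approver, looked up by reporting date rather than hard-coded. The rule name, thresholds, dates and approver below are illustrative assumptions, and the in-memory list stands in for whatever controlled store an institution actually uses.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class RuleVersion:
    rule_id: str
    version: int
    effective_from: date
    approved_by: str
    params: dict


# Hypothetical rule table; thresholds and dates are illustrative only.
RULE_TABLE = [
    RuleVersion("DPD_BACKSTOP", 1, date(2023, 1, 1), "credit_committee",
                {"stage2_dpd": 30, "stage3_dpd": 90}),
    RuleVersion("DPD_BACKSTOP", 2, date(2024, 7, 1), "credit_committee",
                {"stage2_dpd": 30, "stage3_dpd": 60}),
]


def active_rule(rule_id: str, as_of: date) -> RuleVersion:
    """Return the latest version of a rule that is effective on the given date."""
    candidates = [r for r in RULE_TABLE
                  if r.rule_id == rule_id and r.effective_from <= as_of]
    if not candidates:
        raise LookupError(f"No version of {rule_id} effective on {as_of}")
    return max(candidates, key=lambda r: r.effective_from)
```

Because the lookup is by date, a historical run can be reproduced with the parameters that applied at the time, and the table itself shows who approved each change.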

Because stage assignment is central to ECL and often blends quantitative and qualitative triggers, it benefits from structured rule-engine design.

The architecture should support:

- automated SICR tests where appropriate
- use of delinquency backstops
- integration of internal ratings or behavioural scores
- watchlist and restructuring inputs
- override capture
- clear reason coding for stage outcomes

This matters because stage classification is often one of the most sensitive parts of the process. If the system cannot show why an exposure is in a given stage, or if overrides happen outside the controlled workflow, both explainability and auditability weaken quickly.

A mature ECL engine therefore treats stage logic as a first-class system capability rather than a side effect of model execution.
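The sketch below shows one possible shape for reason-coded stage assignment with override capture. The thresholds (30 and 90 days past due, a PD ratio of 2.0), the ordering of tests and the reason-code strings are all assumptions made for illustration; an institution's own policy would define them.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass
class StageResult:
    stage: int
    reason_code: str
    overridden: bool = False


def assign_stage(days_past_due: int, is_defaulted: bool, pd_ratio: float,
                 on_watchlist: bool,
                 override: Optional[Tuple[int, str]] = None) -> StageResult:
    """Assign a stage and record why. All thresholds are illustrative.

    pd_ratio is the current PD divided by the PD at origination.
    override is an optional (stage, approver) pair captured through workflow.
    """
    if is_defaulted or days_past_due >= 90:
        result = StageResult(3, "DEFAULT_OR_DPD90")
    elif days_past_due >= 30:
        result = StageResult(2, "DPD30_BACKSTOP")
    elif pd_ratio >= 2.0:
        result = StageResult(2, "SICR_PD_RATIO")
    elif on_watchlist:
        result = StageResult(2, "WATCHLIST")
    else:
        result = StageResult(1, "NO_TRIGGER")

    if override is not None:
        stage, approver = override
        # Keep the rule-driven reason so the override remains explainable.
        result = StageResult(
            stage, f"OVERRIDE_BY_{approver}_FROM_{result.reason_code}", overridden=True
        )
    return result
```

The point of the design is that every stage outcome carries a reason, and an override never erases the rule-driven result it replaced.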

Most institutions do not use one single ECL method across all portfolios. The technology architecture should therefore support multiple methodology families where needed.

These may include:

- PD-LGD-EAD models for loan books
- provision matrices for trade receivables
- roll-rate or vintage logic for selected portfolios
- discounted cash flow modules for individually assessed assets
- contingent exposure logic for guarantees
- low-complexity rules for immaterial or low-risk financial assets

This means the calculation layer should be modular. It should allow portfolio-specific methods to coexist within one governed reporting architecture. A one-method engine forced across all portfolios often results either in methodological compromise or heavy manual supplementation.
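A modular calculation layer can be sketched as a registry of methods keyed by name, with portfolios mapped to methods through configuration. The two methods below are deliberately simplified (a single-period PD x LGD x EAD with no discounting, and a provision matrix with invented loss rates), and the portfolio names are hypothetical.

```python
from typing import Callable, Dict

# Registry mapping a methodology name to its calculation function.
METHODS: Dict[str, Callable[[dict], float]] = {}


def register(name: str):
    """Decorator that adds a calculation function to the registry."""
    def decorator(fn):
        METHODS[name] = fn
        return fn
    return decorator


@register("pd_lgd_ead")
def pd_lgd_ead(exposure: dict) -> float:
    """Single-period expected loss: PD x LGD x EAD (no discounting here)."""
    return exposure["pd"] * exposure["lgd"] * exposure["ead"]


@register("provision_matrix")
def provision_matrix(exposure: dict) -> float:
    """Loss rate looked up by ageing bucket; rates are illustrative only."""
    rates = {"current": 0.01, "31-60": 0.05, "61-90": 0.15, "90+": 0.50}
    return exposure["balance"] * rates[exposure["bucket"]]


# Hypothetical portfolio-to-method configuration.
PORTFOLIO_METHOD = {
    "term_loans": "pd_lgd_ead",
    "trade_receivables": "provision_matrix",
}


def calculate_ecl(portfolio: str, exposure: dict) -> float:
    """Dispatch to whichever method is configured for the portfolio."""
    return METHODS[PORTFOLIO_METHOD[portfolio]](exposure)
```

Adding a new methodology then means registering one more function and updating the configuration, without touching the reporting chain that consumes `calculate_ecl`.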

Forward-looking information is too important to be managed casually. A mature ECL engine should support structured scenario management.

This may include:

- storage of scenario versions
- mapping of macro variables to portfolios or model inputs
- scenario weighting controls
- effective dates
- comparison to prior scenarios
- approval status
- links to the final model run

This matters because scenario changes can materially alter the allowance. If scenario sets are shared through uncontrolled files or email attachments, it becomes much harder to prove which assumptions drove a given reporting period. A proper scenario layer brings traceability and control to one of the most judgment-sensitive elements of the framework.
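As a rough illustration of scenario control, the sketch below computes a probability-weighted ECL only when the scenario set is approved, its weights sum to one, and its scenario names match the model run. The `ScenarioSet` structure, version label and figures are assumptions for the example.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class ScenarioSet:
    version: str
    approved: bool
    weights: dict   # scenario name -> probability weight


def weighted_ecl(scenario_set: ScenarioSet, ecl_by_scenario: dict) -> float:
    """Probability-weighted ECL across scenarios, refusing uncontrolled inputs."""
    if not scenario_set.approved:
        raise ValueError(f"Scenario set {scenario_set.version} is not approved")
    if not math.isclose(sum(scenario_set.weights.values()), 1.0, abs_tol=1e-9):
        raise ValueError("Scenario weights must sum to 1")
    if set(scenario_set.weights) != set(ecl_by_scenario):
        raise ValueError("Scenario names do not match the model run")
    return sum(w * ecl_by_scenario[name]
               for name, w in scenario_set.weights.items())
```

With weights of 0.5, 0.2 and 0.3 on base, upside and downside results of 1,000, 800 and 1,600, the weighted figure is 500 + 160 + 480 = 1,140. Storing the `version` alongside the run output is what lets a reviewer later confirm which assumptions produced the number.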

Many institutions run the core model inside a system but maintain overlays in separate spreadsheets or presentations. This creates a weak point in the architecture.

A stronger design captures overlays and post-model adjustments in a controlled layer that supports:

- rationale
- scope
- quantification
- approvals
- version history
- period-to-period tracking
- linkage to final reporting output

This does not mean every judgment must be automated. It means the architecture should preserve the governance and reporting impact of those judgments. If material adjustments sit outside the engine, the engine cannot claim to represent the actual final impairment process fully.
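A controlled overlay layer could be as simple as a structured record that carries rationale, scope, amount and approval, with only approved overlays flowing into the final figure. The field names and the rule that a proposer cannot approve their own overlay are assumptions of this sketch.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Overlay:
    overlay_id: str
    period: str
    scope: str             # e.g. a portfolio or segment name
    amount: float          # positive values increase the allowance
    rationale: str
    proposed_by: str
    approved_by: Optional[str] = None
    history: list = field(default_factory=list)

    def approve(self, approver: str) -> None:
        """Record approval; the proposer may not approve their own overlay."""
        if approver == self.proposed_by:
            raise PermissionError("Proposer cannot approve their own overlay")
        self.approved_by = approver
        self.history.append(("approved", approver))


def final_allowance(model_ecl: float, overlays: list) -> float:
    """Model output plus approved overlays only; unapproved ones are excluded."""
    return model_ecl + sum(o.amount for o in overlays if o.approved_by is not None)
```

The judgment itself stays with people. The record simply makes its amount, reasoning and approval part of the same chain as the model output.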

An ECL engine should support the operational workflow of the close. This includes not only calculation but also state transitions such as:

- data received
- data validated
- stage rules applied
- model run completed
- exceptions reviewed
- overlay proposed
- management review complete
- final output approved
- journal posting released

This workflow capability is valuable because it turns control steps into visible process states. It reduces the risk of late uncontrolled changes and helps preserve audit trail. A system that calculates well but provides no workflow control often forces governance back into email chains and manual trackers.
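The state transitions listed above can be sketched as a small state machine. This version assumes a strictly linear sequence in which each state must follow the previous one, which is a simplification: a real close may need branches, for example a period with no overlays or a rerun after a failed validation.

```python
from enum import IntEnum


class CloseState(IntEnum):
    DATA_RECEIVED = 1
    DATA_VALIDATED = 2
    STAGE_RULES_APPLIED = 3
    MODEL_RUN_COMPLETED = 4
    EXCEPTIONS_REVIEWED = 5
    OVERLAY_PROPOSED = 6
    MANAGEMENT_REVIEW_COMPLETE = 7
    FINAL_OUTPUT_APPROVED = 8
    JOURNAL_POSTING_RELEASED = 9


class CloseRun:
    """Tracks one reporting period through the close, logging who moved it."""

    def __init__(self, period: str):
        self.period = period
        self.state = CloseState.DATA_RECEIVED
        self.log = [(CloseState.DATA_RECEIVED, "system")]

    def advance(self, to: CloseState, user: str) -> None:
        """Allow only the next state in sequence, so steps cannot be skipped."""
        if to != self.state + 1:
            raise ValueError(f"Cannot move from {self.state.name} to {to.name}")
        self.state = to
        self.log.append((to, user))
```

Because `advance` rejects any jump, a journal cannot be released before the output is approved, and the log shows who moved the run into each state.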

A mature ECL architecture should preserve audit trail automatically where possible.

This includes:

- who changed a rule
- when a scenario was uploaded
- which version of the model ran
- who approved an overlay
- what output file became final
- when a stage override was entered
- what journal file was generated

This matters because ECL scrutiny often depends on sequence. Reviewers want to know not just what the final outcome was, but how the process moved from input to output. An architecture that captures this naturally is far stronger than one that relies on reconstructed evidence after the close.
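One possible way to capture sequence automatically is an append-only event log in which each entry stores a hash of the previous entry. Hash chaining is a design choice introduced here for illustration rather than something the framework prescribes, and the action names and field layout are hypothetical.

```python
import hashlib
import json


class AuditTrail:
    """Append-only event log; each entry carries the hash of the previous one."""

    def __init__(self):
        self.entries = []

    @staticmethod
    def _digest(body: dict) -> str:
        return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()

    def record(self, timestamp: str, user: str, action: str, detail: dict) -> dict:
        prev_hash = self.entries[-1]["hash"] if self.entries else ""
        body = {"timestamp": timestamp, "user": user, "action": action,
                "detail": detail, "prev_hash": prev_hash}
        entry = {**body, "hash": self._digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash; an edited or removed entry breaks the chain."""
        prev_hash = ""
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev_hash or self._digest(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True
```

Events such as a scenario upload or an overlay approval are written as they happen, so the sequence does not have to be reconstructed after the close, and `verify` gives a cheap check that the record has not been altered.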

Real ECL processes generate exceptions: missing data, failed validations, unusual balances, override requests, outlier exposures, delayed valuations, unresolved reconciliations.

A strong architecture should support exception handling through controlled mechanisms such as:

- flags
- queues
- reason codes
- issue ownership
- temporary treatment approval
- closure tracking

This prevents the process from degrading into informal workarounds. It also helps the institution learn where recurring data or process weaknesses exist.
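A minimal sketch of such a mechanism is an exception queue whose items carry a reason code, an owner and a closure flag, plus a summary of which reasons recur. The class names, reason codes and owners below are invented for the example.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Optional


@dataclass
class ExceptionItem:
    item_id: int
    exposure_id: str
    reason_code: str                      # e.g. "MISSING_COLLATERAL_VALUE"
    owner: str
    temporary_treatment: Optional[str] = None
    closed: bool = False


class ExceptionQueue:
    def __init__(self):
        self.items = []

    def raise_item(self, exposure_id: str, reason_code: str, owner: str) -> ExceptionItem:
        """Log a new exception with an owner so it cannot be handled informally."""
        item = ExceptionItem(len(self.items) + 1, exposure_id, reason_code, owner)
        self.items.append(item)
        return item

    def open_items(self) -> list:
        return [i for i in self.items if not i.closed]

    def recurring_reasons(self) -> list:
        """Reason codes ranked by frequency, to show where weaknesses repeat."""
        return Counter(i.reason_code for i in self.items).most_common()
```

Reviewing `recurring_reasons()` across periods is one way to turn individual exceptions into evidence of a structural data or process gap.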

The same engine should ideally support different reporting views from one controlled data and output chain.

These may include:

- risk management views
- finance posting views
- movement analysis
- stage disclosures
- portfolio dashboards
- validation packs
- board summaries
- audit support reports

This does not mean one identical report for everyone. It means one governed reporting architecture feeding multiple views. If different teams extract their own versions from uncontrolled sources, consistency weakens rapidly.

The ECL engine should not stop at a final reserve number. It should support a clear handoff into accounting.

This may involve:

- reserve-by-portfolio outputs
- movement files
- journal-entry support files
- write-off and recovery classification support
- reconciled balances
- links to disclosure data

The goal is not necessarily full straight-through posting in every institution, though that may be desirable. The goal is controlled and reconciled integration. Finance should be able to trace booked numbers back to approved model output without depending on ad hoc translation work each period.
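The traceability described here can be illustrated with a simple reconciliation: journal movements are derived from the approved closing allowance, and a check confirms that opening balance plus booked movement equals the approved output for every portfolio. Portfolio names, figures and the tolerance are assumptions, and the sketch ignores write-offs, recoveries and currency effects.

```python
def build_journal(opening: dict, approved_closing: dict) -> list:
    """Create one allowance movement line per portfolio (closing minus opening)."""
    portfolios = sorted(set(opening) | set(approved_closing))
    return [
        {"portfolio": p,
         "movement": round(approved_closing.get(p, 0.0) - opening.get(p, 0.0), 2)}
        for p in portfolios
    ]


def reconcile(opening: dict, journal: list, approved_closing: dict,
              tolerance: float = 0.01) -> list:
    """Return portfolios where opening + journal differs from approved output."""
    booked = dict(opening)
    for line in journal:
        p = line["portfolio"]
        booked[p] = booked.get(p, 0.0) + line["movement"]
    return [
        p for p in sorted(set(booked) | set(approved_closing))
        if abs(booked.get(p, 0.0) - approved_closing.get(p, 0.0)) > tolerance
    ]
```

An empty list from `reconcile` means the booked numbers tie back to the approved output. Any portfolio it returns is a break to investigate before posting is released.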

An architecture that works for a small pilot portfolio may fail under growth.

Scalability questions include:

- Can the engine handle more products?
- Can it support more frequent closes?
- Can new stages, rules or scenario dimensions be added without redesign?
- Can portfolio-specific methods be added without breaking the reporting chain?
- Can historical runs be stored and queried?
- Can more users interact with it under proper access control?

These questions matter because ECL rarely remains static. The institution may add geographies, products, new regulatory expectations or more refined controls. A brittle architecture becomes a strategic bottleneck.

Because ECL contains sensitive data and judgment-heavy adjustments, user roles matter.

The architecture should define who can:

- view data
- change mappings
- enter overrides
- update scenario sets
- propose overlays
- approve final outputs
- release reporting packs

Role-based access is not only an IT concern. It is part of control design. It supports maker-checker discipline, reduces the risk of unauthorised changes and strengthens audit readiness.
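As a sketch of how role-based access and maker-checker discipline fit together, the snippet below checks an action against a role-to-permission matrix and refuses approval when maker and checker are the same user. The role names and the split of permissions are hypothetical.

```python
# Hypothetical role-to-permission matrix; role names are assumptions.
ROLE_PERMISSIONS = {
    "analyst": {"view_data", "propose_overlay", "enter_override"},
    "data_steward": {"view_data", "change_mappings"},
    "scenario_owner": {"view_data", "update_scenarios"},
    "approver": {"view_data", "approve_final_output", "release_reporting_pack"},
}


def can(role: str, action: str) -> bool:
    """True if the role holds the permission; unknown roles hold none."""
    return action in ROLE_PERMISSIONS.get(role, set())


def approve_output(maker: str, checker: str, checker_role: str) -> bool:
    """Maker-checker: the approver needs the permission and must not be the maker."""
    if not can(checker_role, "approve_final_output"):
        raise PermissionError(f"Role {checker_role} cannot approve final outputs")
    if maker == checker:
        raise PermissionError("Maker and checker must be different users")
    return True
```

Keeping the matrix as data rather than scattered conditionals means the access design can itself be reviewed and approved like any other rule.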

Several recurring design weaknesses appear in practice.

One is using multiple disconnected spreadsheets as the primary processing environment, which makes control and lineage fragile.

Another is mixing source data logic, business rules and final reporting adjustments in the same files, creating opacity.

A third is keeping scenarios and overlays outside the controlled system, even though they materially influence the allowance.

A fourth is building only a calculation engine without workflow or approval support.

A fifth is lacking a standardised ECL data layer, forcing repeated reinterpretation of source fields.

A sixth is treating exception handling informally, which turns recurring weaknesses into manual habits.

A seventh is producing different outputs for risk, finance and disclosure through separate extraction logic, weakening consistency.

These failures are not only operational annoyances. They often become governance and audit weaknesses as the framework matures.

Consider two institutions with similar ECL methodology.

The first institution runs core calculations from a spreadsheet model, imports balances manually from several systems, applies stage overrides in a separate file, manages overlays in PowerPoint-backed memos and prepares journal entries through a finance workbook. The process works, but only because a small group of experienced people knows the sequence.

The second institution uses a standardised ECL data layer, controlled rule tables, workflow-based scenario approval, integrated model execution, in-system overlay capture, issue queues and reconciled output files for finance and disclosures.

Both institutions may produce similar allowance numbers for a time. But the second institution can scale, explain, audit and repeat the process with much greater confidence. The difference is architectural maturity.

A strong institutional architecture usually includes:

- clear source-system integration
- a standardised ECL data layer
- governed business-rule management
- modular support for multiple methodologies
- dedicated staging and scenario layers
- controlled overlay capture
- workflow and approval states
- audit trail
- exception handling
- reporting outputs for risk and finance
- systematic ledger integration

The strength of this architecture lies in turning ECL into an operating platform rather than a recurring assembly exercise.

Technology architecture for an ECL engine is ultimately about making the impairment framework executable with discipline. It gives the institution the ability to move from concept to controlled repetition. It ensures that data arrives in a structured form, that rules are visible, that models run in a governed environment, that scenarios and overlays are captured properly, that workflows are controlled, that outputs are traceable and that accounting and reporting integration are reliable. It reduces dependence on institutional memory and increases dependence on designed process.

A strong institution does not ask only whether it has an ECL tool. It asks whether the architecture supports the full impairment lifecycle with enough control, scalability and transparency to make the framework sustainable. It understands that the best ECL engine is not necessarily the one with the most impressive interface or the most complex model library. It is the one that keeps the institution in control of data, judgment, workflow and reporting as the framework evolves.

In that sense, this pillar teaches a practical truth about ECL execution: methodology becomes durable only when architecture can carry it.
